Mind Crunches #20: Preparing For Liftoff!

Alternatively: You get an LLM, and you get an LLM. Everyone gets an LLM! *Oprah Winfrey gif*

✍🏽 Reflections On Generative AI

On AI FOMO: The pace of AI innovation is so fast that I am sure we have never witnessed anything like it before. The most amazing part is that there is always something new at every level: scientific (new papers), business (new startups) and tech (open-source LLMs, new features). Many people, myself included, have huge FOMO and try to keep up with the latest and greatest in AI, but of course this isn’t possible. Or at least it’s impossible if you have a day job and a basic social life. (Disclaimer: I feel truly lucky that part of my actual job is to stay up to speed with AI technology advancements.) Lists are always a good place to start if you want to stay up to speed. The following people have personally helped me a lot to keep track of the most interesting things happening in AI, and I hope they will help you too: Mckay Wrigley, Greg Brockman, Sebastien Bubeck, Andrej Karpathy, Jude Gomila, Nick St. Pierre, Harrison Chase, John Nay, Aran Komatsuzaki, Ethan Mollick, Jim Fan, Linus Ekenstam, Emad Mostaque. You are welcome!

On AI Safety/Doomerism: one of the most polarizing debates in intellectual circles is, in a nutshell, whether AI will kill all humans. At first glance, this might seem like a straightforward debate, but it’s not. It’s complex and multi-layered (technology, philosophy, policymaking), and it requires open discussion, intellectual humility and bold decisions if we want to make the most out of this amazing technology. I have been reading, thinking and talking about AI safety a lot, and I must admit that I have found very plausible and convincing arguments in both camps. Zvi Mowshowitz, Scott Alexander and Eliezer Yudkowsky have some really great posts on the risks of advanced AI, but the same applies to Tyler Cowen, who makes the case for an “inevitable future”. I am still actively reading and educating myself in order to take an informed position in this debate but, as of today, I’d put myself in the “moderately tech optimist” camp. What does this mean? It means that I genuinely believe (I understand that this word carries a degree of metaphysics, and I am perfectly fine with that) that the benefits of Generative AI will dramatically outweigh the risks and that we neither can nor should “pause” the pace of AI innovation. At the same time, this doesn’t mean that we should treat AI exactly like any other technology or be complacent about how to properly manage it at the policymaking level. This is a truly transformative technology, and I am certain that the probability of AI becoming our overlord is not zero. Having said that, this is where the whole debate becomes tricky. Which institution has the expertise to regulate such a world-changing technology? Should we trust government agencies to wisely decide on such matters? I personally don’t. So what should we do?
I’d suggest that we (of course) let the tech industry keep building and innovating while, at the same time, forming some sort of universal organization (à la the United Nations), composed of scientists, philosophers and technologists, that could set global standards, codes of ethics, responsible use frameworks, etc. The older I get, the closer I come to the “action first” mindset that I presented in my #18 blogpost (excerpt below).

On Action-First Worlds: Something I have been thinking about a lot lately is the balance between Active-Proactive-Reactive and how it shapes the world we live in. A useful analogy is: a) Active = Entrepreneur, b) Proactive = Thinker, c) Reactive = Politician/Regulator. With that in mind, for almost all emerging technologies (AGI, crypto, bioscience) you need a healthy balance of a+b BEFORE major innovations are released, but that’s rarely achievable. One reason is the misalignment of human incentives, but philosophically a more important reason is that it’s extremely hard to test theoretical hypotheses. Hypotheses are not good at accounting for the infinite possibilities that only actions can generate. That became obvious to me while watching “We Are As Gods”, a documentary about Stewart Brand’s vision to bring back the mammoth. I believe that de-extinction has great risks, but I also believe that it has a much bigger upside. The same applies to AI, crypto and space exploration. We should always push for more “action” because, teleologically, this is the only way we can move forward. The golden balance would be to find a tested method for doing “pre-mortems” that would actually accelerate innovation. Virtual environments could enable us to launch pre-mortem worlds. Until then, we should become much smarter when it comes to regulation. Tomicah Tillemann’s framework is a good start on how to distinguish between punitive enforcement regulation and consultative rulemaking.

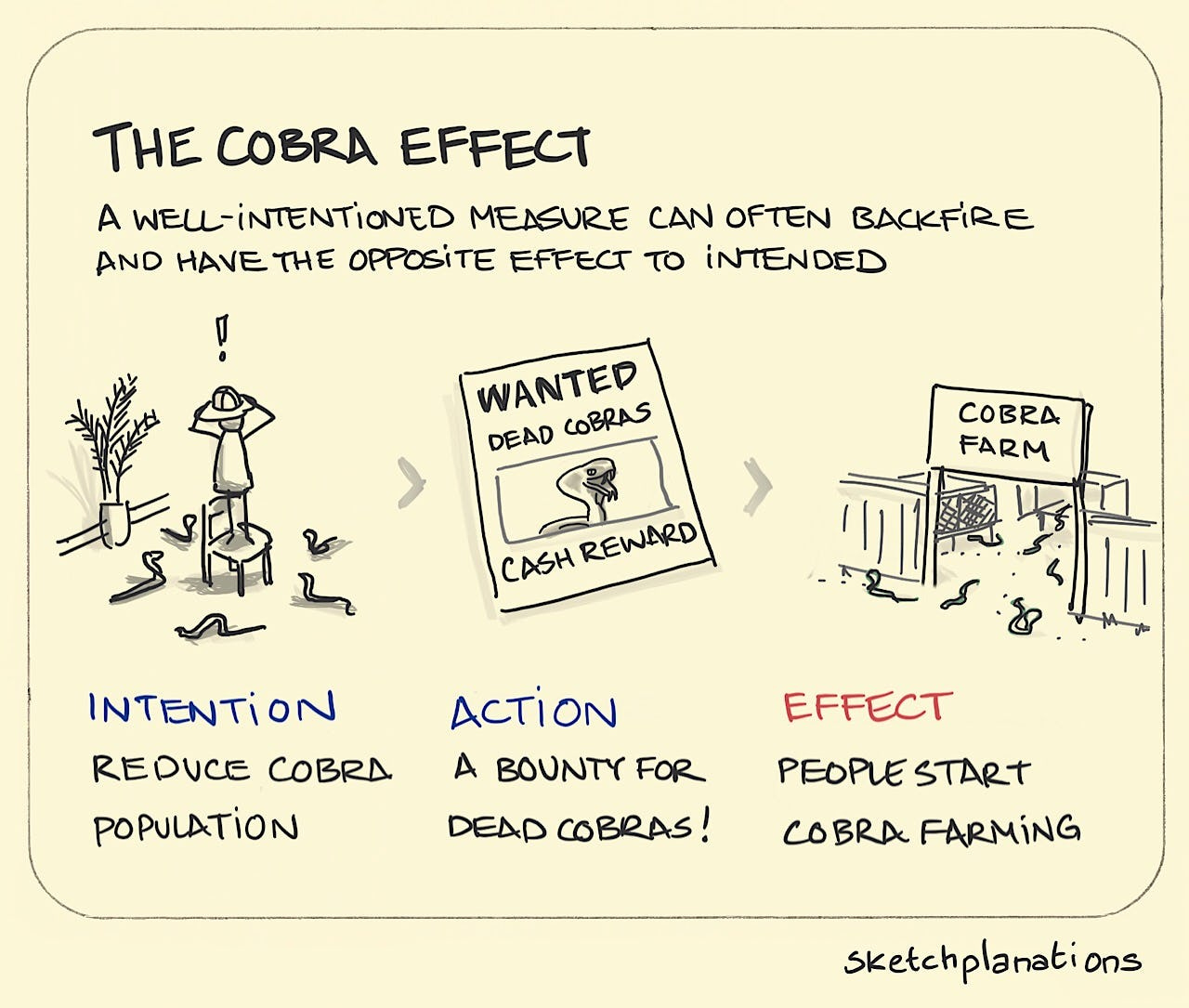

On the Law of Unintended Consequences: double-clicking on the regulatory part of the AI debate, another reason (on top of their lack of specialized knowledge) that I am very skeptical of governments/institutions/agencies regulating AI is that they will generate negative consequences we can’t even predict. This is based on the popular law of unintended consequences, which says that the actions of governments always have effects that are unanticipated or unintended. For example, Italy banning ChatGPT, besides being super stupid, is riskier than just allowing people to use it. It stifles innovation and incentivizes “rogue actors” to offer other LLMs that may be less open and transparent. The Cobra Effect in its full glory!

On Plurality: as of today, the only framework/initiative/project on how to build more transparent and socially diverse technologies that I have found to be really thorough and well-researched is Plurality. It’s a multidisciplinary field of research focusing on the governance of emerging technologies, the right use of Foundation Models and decentralized social technologies. It is led by Glen Weyl, a colleague of mine at Microsoft, an inspiring thinker and an amazing human being. I highly encourage you to read their latest papers and announcements.

On AI + Web3: if you have been following my blogposts, you will know that I am a big advocate of multidisciplinary thinking and consilience. I strongly believe that innovation is (often) random and that amazing things happen when you mix (seemingly) different technologies together. In that sense, I am very happy to see that it’s now part of the mainstream consensus that AI and blockchain technologies complement each other perfectly, bringing utility and scalability to one another. People now realize that the upcoming flood of “synthetic” content (text, audio, image, code) will create challenges around ownership, authenticity and provenance that only blockchain and zero-knowledge proof technologies can address. The a16z post on ML and ZKPs, Worldcoin’s “Humanness” announcement and startups like Alethea are great starting points if you want to scratch the surface of AI+Web3. Exciting times ahead! Bonus: what I was writing about Generative AI + Blockchain in December and February.

On M2M Marketing: we have spent the last decades dividing the “science” of Marketing into B2C and B2B. First of all, I always thought these terms were a bit narrow and did not reflect how the world has been changing in recent years. But my main objection to these terms was actually philosophical. By grouping all transactions into the buckets of “consumer” and “business”, we stripped out the fundamental truth that, at the end of the day, there were humans involved on both sides of the equation. Someone was always selling something (products, services) to another person. Yes, the context might change depending on whether you are buying a cup of coffee or enterprise software, but the same universal rules applied to both scenarios (price sensitivity, outcomes, utility, etc.). However, we are now entering an era in which it’s very likely that two LLMs (machines) will be transacting with each other. One LLM will create marketing material (emails, presentations, ads, visuals) to convince another LLM (trained on a company’s data and fine-tuned to its specific needs and available marketing budget) to buy something. One could argue that it’s still humans who decide to train the LLMs and adjust them accordingly, but you get my point. Machine-to-Machine marketing is here!

On Horizontal vs Vertical Businesses: the main theme of my #15 blogpost was that the lines between industries are becoming very blurry and that most companies are basically “consumer/employee experience providers”. We used to categorize companies into distinct verticals. Walmart was retail. HSBC was banking. Disney was entertainment. Apple was (mostly) devices. In recent years, these lines have faded. Which industry would you say Starbucks belongs to? I have no idea. One of the main reasons this happened is that every company became a digital company. They all used digital technologies to enter new fields, diversify their strategy and (some of them) build moats. The enterprise software market was shaped accordingly. For example, most CSPs offer the same services to all customers, fine-tuning them only for a few special industry applications like manufacturing or healthcare. One thing that might change this trend is industry- and company-specific LLMs. If CVS, Wells Fargo and Nike train LLMs on their proprietary data (which they surely will), this will make them much more focused on specific parts of their core business, and they will just infuse some basic intelligence/reasoning horizontally across their various LoBs (marketing, HR, sales, finance).

📌 Mind Crunches On Generative AI

Stephen Wolfram is a global treasure. His (long) post on how ChatGPT actually works is great.

Fascinating paper on AI Darwinism. In a nutshell, the authors argue that AI agents may be better than humans at the game of natural selection!

One of the first and most comprehensive research papers on the impact of AI in medicine, written by the excellent Peter Lee.

Microsoft Research scientists issued a report arguing that GPT-4 shows early signs of AGI. I am not qualified to assess this paper, but because I have the utmost respect for Microsoft Research, it stresses me out.

Every day, a business model or business moat that has existed in tech for decades becomes obsolete (or less relevant) due to the pace of AI. If you think about it, popular business models like platforms, marketplaces and SaaS will be redefined because the dynamics have completely shifted. For example, ChatGPT plugins are a strategic chess move by OpenAI that probably killed hundreds of businesses in a second. Databricks releasing Dolly and showing that you can build GPT-like capabilities with little training on one machine is mind-blowing. How do you position yourself against great open-source LLMs like LLaMA? I am certain that in the next 1-2 years we will see new business models and new forms of business partnership emerge. It’s a 4D chess game!

Essay on the impact of Generative AI on developing economies. TL;DR: the potential is very promising.

Applying biomimicry logic to LLMs.

Elad Gil on how the AI market will be shaped. Super insightful.

We are pretty good at testing hypotheses, but what about creating new ones? New report on ML-generated hypotheses.

Mind Blown: SudoLang is a pseudocode language created by LLMs to program LLMs.

If you believe that the field of AI Alignment is super advanced with thousands of brilliant scientists working on it, as I did, maybe you should reconsider.

💡Non-AI Mind Crunches

New research shows that plants emit ultrasonic airborne sounds when stressed. This makes absolute sense and aligns with what I have previously written about Umwelt and biomimicry. We need a PlantGPT.

The new Economic Report of the President, issued by the White House, is a very interesting read (especially if you are working in web3). I don’t remember ever reading such an obsessive bias against a set of technologies, in this case blockchain. Bonus: Katie Haun’s article in the WSJ about Operation Chokepoint 2.0. Addendum: It’s clear the SEC wants to kill crypto. Have you seen anything more embarrassing than Gensler’s testimony in Congress?

Declining birth rates are a much more frightening scenario than AI doomerism. Palladium argues that the way to encourage higher fertility is to inspire a “better culture”. Overall, when it comes to global-scale problems, we tend to ignore culture-based solutions in favor of technology and/or policy approaches.

Ben Evans’ decks are the best.

Stripe’s business report is an amazing read about the Internet economy as a whole.

Not every innovation is an AI innovation. Varda manufactures novel pharmaceuticals in space and sends them back to Earth in space capsules. Humans are amazing!

I have written many times in the past about the power of the “O-Ring Problem” concept. Adam Mastroianni perfectly captures the essence of this concept in his latest post about science being a strong-link problem.

The age of average. Absolutely true and a good wake-up call for everyone. Bonus: this post reminded me of the famous Whither Tartaria post!

Dan Wang’s annual letters are always a must-read. The 2022 recap is especially interesting because he shares details about daily life in China during Covid.

We could be flying from LA to NY in 90 minutes, but regulation has banned supersonic flights over land to control aircraft noise. Again, remember the law of unintended consequences.

Fascinating read on how top scientists and Nobel laureates fail to clearly communicate their findings. In this case, Kahneman, among others, failed to properly explain findings about the correlation between income and happiness.

Alexey Guzey’s lifehacks are **chef’s kiss**

Recommended book: Isonomia and the Origins of Philosophy, Kojin Karatani.

Recommended podcast: Manolis Kellis on Lex Fridman. Top quality!

Recommended newsletter: Glasswing, by Shrey Jain

Quote of the month: “The love of complexity without reductionism makes art; the love of complexity with reductionism makes science.” Edward O. Wilson

Photo of the month: People reacting to Starship’s inaugural flight!